It's Saturday morning, and you have no plans. Perhaps your schedule happened to line up that way, perhaps the kids are gone, or maybe it's just luck. Regardless, it's glorious. You bask in the glow of a lazy morning while you decide just how you're going to spend today. In your mind you go over different ideas, considering each and its consequences.

You could go to the beach! No, too much effort. You could stay in and read a book, but it's a nice day. You could read outside. Perhaps, though, it's better to go for a long walk first, maybe grab a coffee? You also need to pick up some things at the store, and that's on the way. Eventually you arrive at a plan: walk to get coffee, read for a while at the café, then stop by the store on the way home! An excellent plan, efficient and relaxing!

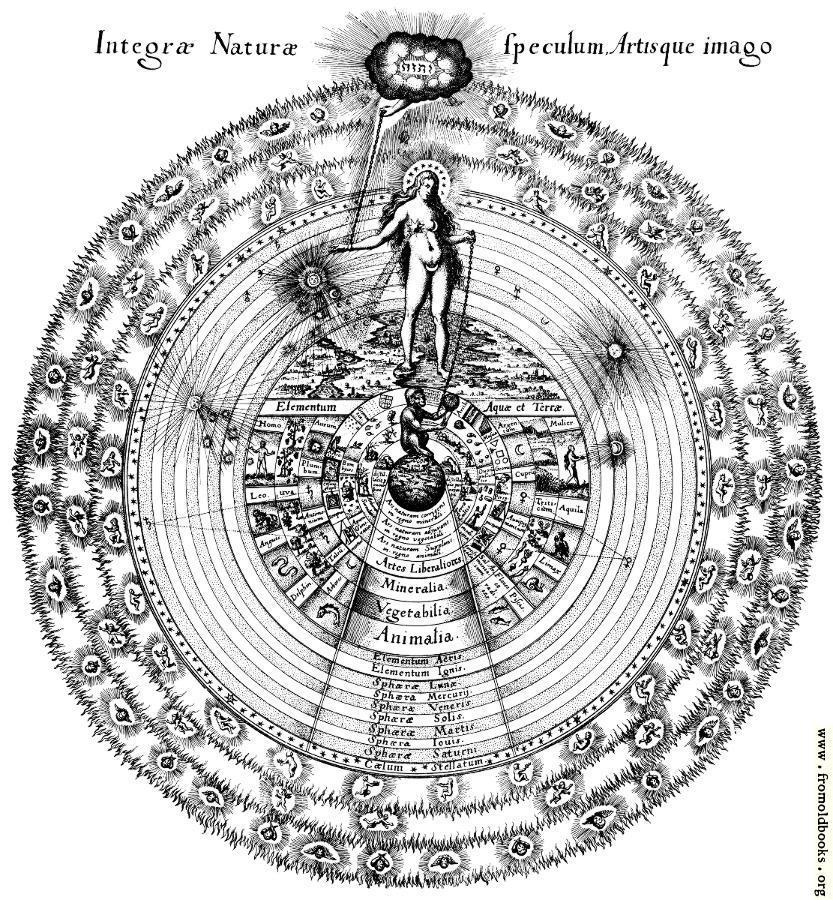

What have you just done? How did you arrive at this decision? There were so many variables, a nearly infinite possibility space, yet you managed to settle on a plan. Perhaps Nature itself is not so dissimilar.